The Pain of Early Adoption

My colleague Pedro Reys shared a great article by Gavin Vickery today, a one-year retrospective on using NodeJS in production. You should go read it. Now.

Aside from the technical lessons learnt, Gavin’s overall experiment is a great example of the problems early adopters typically face: a small ecosystem, buggy & unfinished features, missing documentation, lost productivity, and most importantly, a significant risk of failure. The article justified (in my head) a little rule I try to remember: avoid being an early adopter unless absolutely necessary.

The reason I have to remind myself is that lately, calling yourself an early adopter has become cool. You know that friend who has to buy the latest iDevice or 4K television, and then humblebrag: “I’ll admit, I’m an early adopter :)” (swagger swagger).

I blame Apple. Their first versions of the iPhone, iPad and Watch were so brilliant, so polished and so appealing that the usual risks of early adoption didn’t really apply. Consumers got the product benefits that early-majority users normally get, along with the ego boost of being ahead of the crowd.

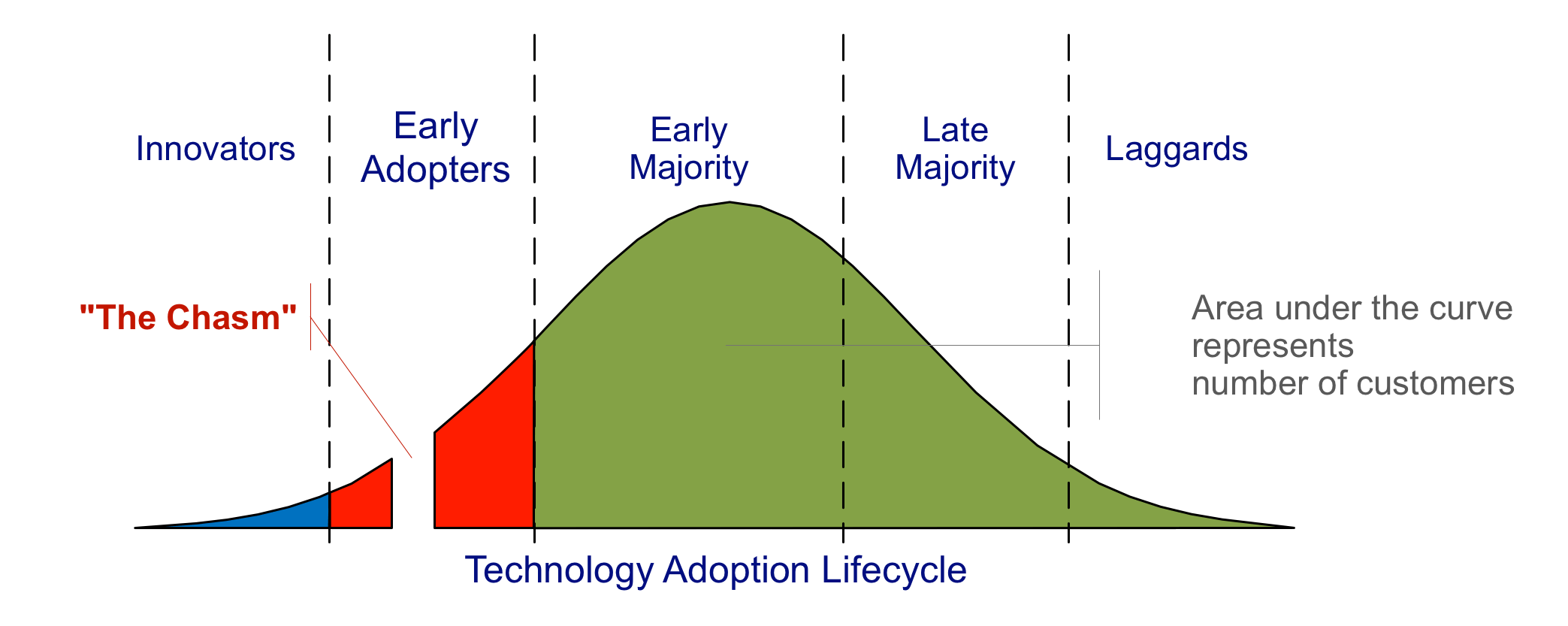

That’s not typically the case though, which is why on the technology adoption lifecycle I sit happily (and also smugly, sometimes) in the middle of the bell curve:

And I get significant benefits too: I save time, effort and money, I avoid the consumerist feeding frenzy and make a more rational decision, and in the end (if I do buy) I get a better version of the product or service in question.

In my consulting career I’ve come across two significant incidents where the team chose a shiny new technology stack despite tight deadlines and put the project at serious risk. One time we built a mobile CRM app on a (then) half-baked mobile framework with a 6-month timeline. If it hadn’t been for the herculean efforts of the tech lead and the lead engineer, the project would’ve been an utter failure. Another time we agreed with the client to use NodeJS for the back end, but also agreed to a fixed deadline. It was madness.

So the next time the choice of being an early adopter presents itself, dear reader, please evaluate carefully: is the cost worth the benefit?